Published April 21, 2026

What Is Private AI? The Complete Enterprise Guide for 2026

Pratibha Sharma

Marketing & Communication

Private AI is rapidly becoming a strategic requirement for organisations that cannot afford to send their most sensitive data to third-party cloud AI providers. Whether you operate in financial services, healthcare, the public sector, or any regulated industry, the question is no longer whether to adopt AI — it is how to do so without compromising data sovereignty.

This guide explains what private AI means, why enterprises are prioritising it in 2026, and what a production-ready private AI deployment actually looks like.

What Is Private AI?

Private AI refers to AI systems, particularly large language models (LLMs) and AI assistants, that are deployed, operated, and controlled entirely within infrastructure the organisation controls, rather than consumed through a third-party cloud service. In a private AI setup:

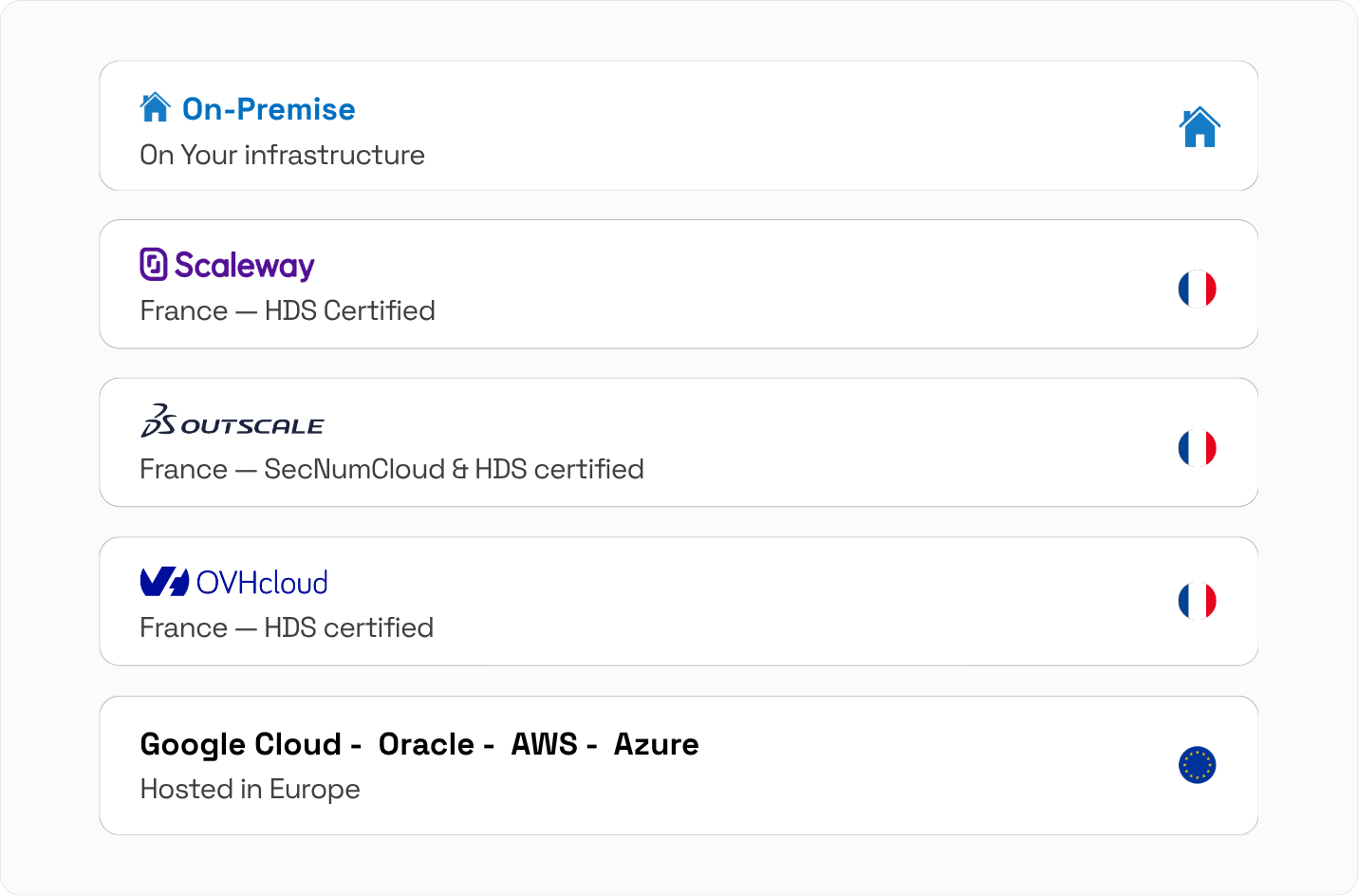

- The AI model runs on infrastructure you own or lease (on-premise servers, private cloud, or dedicated cloud tenancy)

- Your data is never sent to an external AI provider's servers for processing

- You control the model version, update cadence, and access permissions

- All audit logs and conversation data remain within your security perimeter
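One way to make the "data never leaves your perimeter" property checkable is to validate every configured AI endpoint before traffic is allowed to reach it. The following is a minimal sketch under stated assumptions: the network ranges, the DNS suffix `.corp.example`, and the function name `is_inside_perimeter` are all hypothetical placeholders, not part of any product.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical internal address ranges; replace with your own.
INTERNAL_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]
# Hypothetical internal DNS suffix; replace with your own.
INTERNAL_SUFFIXES = (".corp.example",)


def is_inside_perimeter(url: str) -> bool:
    """Return True if the URL's host is an internal IP or an internal hostname."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: fall back to the hostname suffix allowlist.
        return host.endswith(INTERNAL_SUFFIXES)
    return any(addr in net for net in INTERNAL_NETWORKS)


print(is_inside_perimeter("http://10.1.2.3:8000/v1"))      # internal IP
print(is_inside_perimeter("https://api.example.com/v1"))   # external host
```

A check like this could run at start-up or in CI against the deployment configuration. It does not replace network-level egress controls, which remain the actual enforcement point.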

Private AI is often used interchangeably with sovereign AI (particularly in a national or regulatory context) and on-premise AI (emphasising the physical infrastructure location). All three refer to the same core principle: your data stays under your control. Learn more about our approach to data sovereignty.

Why Enterprises Need Private AI in 2026

Regulatory Compliance

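GDPR, HIPAA, NIS2, DORA, and sector-specific regulations impose strict requirements on where and how personal and sensitive data is processed. Many third-party AI cloud providers process data in the United States or other jurisdictions, creating compliance risk for European and regulated organisations. Private AI removes the cross-border transfer question by design, because processing stays on infrastructure you control. The other obligations these regulations impose, such as lawful basis, retention, and access control, still apply.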

Intellectual Property Protection

When you send internal documents, contracts, source code, or strategic plans to a cloud AI service, you are making a legal and practical bet that the provider's data handling is secure. Private AI removes that bet entirely: your intellectual property never leaves your environment.

Data Breach Risk Reduction

Third-party AI providers are attractive targets for attackers precisely because they aggregate sensitive data from thousands of enterprise customers. A private AI deployment limits your blast radius — a breach of the provider does not expose your data because your data was never there.

Auditability and Governance

Regulated industries require detailed records of what decisions were made, based on what data, by what system. Private AI deployments give you full control over logging, retention, and audit trail configuration — something cloud providers often cannot guarantee to the required standard. See how QAnswer handles governance and access control in practice.
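As an illustration of what "full control over logging" can mean, the sketch below records each AI answer with the user, the question, the source documents, and the model, and chains records together by hash so that later tampering is detectable. It is a minimal sketch, not a description of QAnswer's implementation: the field names and the helpers `append_record` and `verify_chain` are assumptions made for this example.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash for the first record


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous record's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: list, user: str, question: str, sources: list, model: str) -> dict:
    """Append one audit record: who asked what, which data was used, which system answered."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    payload = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "question": question,
        "sources": sources,
        "model": model,
    }
    record = {"payload": payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)}
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["payload"]):
            return False
        prev_hash = record["hash"]
    return True
```

In a real deployment the log would live in append-only storage with its own retention policy. The in-memory list here only demonstrates the chaining idea.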

Customisation and Fine-Tuning

With access to the underlying model and infrastructure, private AI deployments can be fine-tuned on domain-specific data — significantly improving accuracy for specialised use cases in legal, medical, engineering, and finance. This is especially relevant when rethinking how enterprise LLMs should be deployed.

Private AI vs Cloud AI: A Comparison

| Dimension | Cloud AI (SaaS) | Private AI (On-Premise / Private Cloud) |
|---|---|---|
| Data residency | Provider's data centres | Your infrastructure |
| GDPR / regulatory risk | Depends on provider contracts | Fully controlled by you |
| Setup complexity | Low (SaaS) | Higher (requires infrastructure) |
| Customisation | Limited | Full access to model and configuration |
| Auditability | Dependent on provider | Full control |
| Scalability | Elastic, instant | Constrained by own infrastructure |

What a Private AI Deployment Looks Like in Practice

Infrastructure

A private AI deployment typically runs on GPU-accelerated servers hosted either in the organisation's data centre or in a dedicated private cloud tenancy. Modern open-weight LLMs (Llama 4, Mistral, Falcon) can be run on mid-range GPU hardware for most enterprise Q&A use cases, without the need for data centre-scale compute.
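A rough way to judge whether a model fits on a given GPU is to estimate the memory its weights need: parameter count multiplied by bytes per parameter. The sketch below does only that arithmetic. The 20% headroom factor and the example model sizes are assumptions chosen for illustration, and the estimate ignores the KV cache, activations, and runtime overhead, so treat it as a lower bound rather than a sizing tool.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_on_gpu(params_billion: float, bits_per_weight: int,
                gpu_memory_gib: float, headroom: float = 0.2) -> bool:
    """Check the weights fit with some spare room (headroom is an assumed safety margin)."""
    needed = weight_memory_gib(params_billion, bits_per_weight) * (1 + headroom)
    return needed <= gpu_memory_gib


# Hypothetical examples: a 7B-parameter model at 16-bit and 4-bit precision.
for bits in (16, 4):
    print(f"7B @ {bits}-bit: {weight_memory_gib(7, bits):.1f} GiB of weights")
```

Quantising to fewer bits per weight reduces the memory footprint proportionally, which is one reason smaller GPUs can be sufficient for enterprise Q&A workloads.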

Retrieval-Augmented Generation (RAG)

Most enterprise private AI deployments combine a private LLM with a retrieval layer that grounds answers in the organisation's own documents and data. This RAG architecture lets the model cite internal content in its answers and substantially reduces the hallucination risk of an LLM answering from general training data alone, although it does not remove that risk completely.
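The retrieval step can be sketched in a few lines. The example below is a deliberately simplified illustration: it ranks documents by bag-of-words cosine similarity instead of the vector embeddings a production system would normally use, and it stops at building the prompt because the call to the private LLM depends on your serving stack. The document ids, the sample texts, and the function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: dict, k: int = 2) -> list:
    """Return the ids of the k most similar documents, skipping zero-score matches."""
    q = Counter(tokenize(question))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id) for doc_id, text in documents.items()]
    scored.sort(reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(question: str, documents: dict, k: int = 2) -> str:
    """Assemble a grounded prompt that asks the model to cite sources by id."""
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in retrieve(question, documents, k))
    return (
        "Answer using only the sources below and cite them by id.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical internal documents.
docs = {
    "hr-policy": "Employees receive 25 days of annual leave per year.",
    "it-security": "Passwords must be rotated every 90 days.",
    "travel": "Flights must be booked through the internal travel portal.",
}
print(build_prompt("How many days of annual leave do employees get?", docs, k=1))
```

Because the prompt carries the source ids, the model's answer can reference them, which is what makes the response traceable back to internal documents.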

Integration with Internal Systems

A production private AI deployment integrates with the systems where your knowledge lives: SharePoint, Confluence, SQL databases, ERPs, and email archives. Users interact through familiar interfaces — a website chatbot, a Teams bot, a Slack integration — without any of that data leaving the corporate network.

Who Needs Private AI?

- Financial services — Banks, insurers, and asset managers operating under GDPR, DORA, and sector-specific supervisory requirements.

- Healthcare and life sciences — Hospitals and pharmaceutical companies handling patient data and clinical trial records under HIPAA and EU health data regulations.

- Public sector and defence — Government agencies and defence contractors for whom data sovereignty is a national security matter.

- Legal and professional services — Law firms and consultancies where client confidentiality obligations prevent sending case materials to third-party servers.

- Industrial and manufacturing — Companies with proprietary design files, process documentation, and trade secrets that represent core intellectual property.

How QAnswer Delivers Private AI for the Enterprise

QAnswer is built from the ground up as a private, sovereign AI platform. It is designed specifically for organisations that need the power of modern LLMs combined with the absolute assurance that their data never leaves their infrastructure.

- Full on-premise deployment — QAnswer runs entirely on your own servers or private cloud. No data is ever sent to a third-party AI provider. This includes the LLM inference layer, the retrieval pipeline, and all conversation logs.

- ISO 27001 certification — QAnswer is certified to the international standard for information security management, giving you and your auditors the assurance they require.

- GDPR-by-design architecture — Data minimisation, purpose limitation, and access control are embedded in the platform's architecture — not bolted on as an afterthought.

- 100+ data source connectors — Connect to your internal knowledge wherever it lives: SharePoint, Confluence, Google Drive, Notion, SQL databases, REST APIs. All retrieval happens within your security perimeter.

- Choice of LLM — QAnswer supports multiple open-weight models (Llama, Mistral, Falcon) as well as private deployments of commercial models. You choose the model that best fits your accuracy, language, and licensing requirements.

- Multi-channel deployment within your perimeter — Website chatbot, Microsoft Teams bot, Slack integration, REST API — all served from your own infrastructure, with no external cloud dependencies.

Conclusion

Private AI is no longer the exclusive domain of defence contractors and central banks. In 2026, any organisation that handles sensitive data — customer records, financial information, clinical data, intellectual property — should be evaluating private AI as their deployment model of choice.

The question is not whether you can afford to invest in private AI. It is whether you can afford not to — given the regulatory, reputational, and competitive risks of sending your most sensitive knowledge to third-party cloud providers. QAnswer makes enterprise-grade private AI accessible, deployable, and genuinely useful — grounded in your data, running in your infrastructure, answering accurately and securely.

Ready to evaluate a private AI deployment for your organisation? Talk to the QAnswer team — and see what sovereign, knowledge-grounded AI looks like in your environment.
