Published April 21, 2026

Generative AI in Customer Service: Complete Guide for 2026

Samir Yacini

Growth Marketer


Generative AI is no longer a future consideration for customer service teams — it is already reshaping how the world's leading organisations handle support at scale. From writing first-draft replies to powering fully autonomous support agents, generative AI in customer service is delivering measurable gains in efficiency, satisfaction, and cost reduction. For a tool-by-tool breakdown, see our guide to the best AI tools for customer support.

This guide explains what generative AI customer service looks like in practice, the specific use cases that deliver the most value, and how to implement it safely without sacrificing accuracy or customer trust.

What Is Generative AI in Customer Service?

Generative AI refers to AI models — primarily large language models (LLMs) like GPT-4, Claude, and Llama — that can generate human-quality text in response to a prompt. In a customer service context, this capability is used to:

- Draft responses to customer emails and tickets

- Power conversational chatbots that handle queries end-to-end

- Summarise long conversation threads for faster agent handoffs

- Generate knowledge base articles from existing support documentation

- Analyse customer sentiment and predict escalation risk

The key distinction from rule-based chatbots is flexibility. A rule-based bot can only answer questions its authors anticipated, whereas generative AI can respond to questions it has not seen before, adapting its language and tone to the context of each interaction.

Key Use Cases for Generative AI in Customer Service

Automated Tier-1 Support

Generative AI chatbots handle your most repetitive queries — order status, password resets, billing questions, policy explanations — without any human involvement. Unlike keyword-based bots, they understand natural language and follow multi-turn conversations fluently. Explore the full range of chatbot use cases for enterprise automation.

Agent Assist and Response Drafting

For queries that escalate to a human agent, generative AI drafts the first response based on the customer's message and relevant knowledge base content. Agents review, edit, and send, which is reported to cut average handle time by 30–50% in some enterprise deployments.

Conversation Summarisation

When a customer has bounced across multiple channels or agents, generative AI instantly summarises the full history so the next agent picks up with complete context — eliminating the need for customers to repeat themselves.

Knowledge Base Generation and Maintenance

Generative AI scans conversation logs, identifies frequently asked questions, and automatically drafts new knowledge base articles — turning support conversations into living documentation.

Multilingual Support at Scale

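Modern LLMs understand and generate text in 30+ languages without requiring separate models or human translators. A single generative AI deployment can therefore serve customers in many regions at once, although answer quality varies by language and should be checked for each language you support.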

Voice of the Customer Analysis

Generative AI analyses thousands of support interactions to identify patterns: which product features drive the most confusion, which agent workflows create bottlenecks, and which customer segments are at highest churn risk.

Benefits of Generative AI in Customer Service

- Speed: Average first response time can drop from hours to seconds for a large proportion of queries.

- Consistency: With clear system prompts and guardrails in place, the AI applies your brand guidelines, tone, and compliance requirements the same way in every interaction.

- Scalability: Handle 10× more queries with the same headcount during peak periods or product launches.

- Cost reduction: Gartner estimates organisations deploying conversational AI reduce cost per contact by 30–40%.

- 24/7 availability: Generative AI operates continuously, serving customers in every time zone.

Risks and How to Manage Them

Hallucination

Generic LLMs can generate confident-sounding but factually incorrect responses, a serious risk in customer service contexts. The main mitigation is retrieval-augmented generation (RAG): instead of generating answers from the model's training data alone, the AI retrieves relevant content from your verified knowledge base before composing a response. Answers are then grounded in content you control, which greatly reduces the risk of fabricated details, although it does not remove it entirely.
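
The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's implementation: the `Article` structure, the function names, and the example.com URLs are hypothetical, and simple word overlap stands in for the embedding-based search that production systems typically use. The sketch stops at building the prompt; the call to an LLM is left out.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Article:
    """A verified knowledge base entry (hypothetical structure)."""
    title: str
    url: str
    text: str


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, articles: list, top_k: int = 2) -> list:
    """Rank articles by word overlap with the question and keep the best matches.

    Word overlap is an assumption made for brevity; it stands in for
    the semantic search a real deployment would use.
    """
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(a.text)), a) for a in articles]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [article for _, article in relevant[:top_k]]


def build_grounded_prompt(question: str, passages: list) -> Optional[str]:
    """Compose a prompt that restricts the model to the retrieved passages.

    Returns None when nothing relevant was retrieved, so the caller can
    hand off to a human instead of letting the model guess.
    """
    if not passages:
        return None
    sources = "\n".join(
        f"[{i}] {a.title} ({a.url}): {a.text}"
        for i, a in enumerate(passages, start=1)
    )
    return (
        "Answer the customer using only the sources below. "
        "Cite sources by number. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nCustomer question: {question}"
    )


# Hypothetical knowledge base and question, for illustration only.
kb = [
    Article("Refund policy", "https://example.com/kb/refunds",
            "Refunds are issued within 14 days of purchase."),
    Article("Password reset", "https://example.com/kb/password",
            "Reset your password from the login page."),
]
question = "How do I reset my password?"
prompt = build_grounded_prompt(question, retrieve(question, kb))
print(prompt)
```

Numbering the sources in the prompt is what makes it possible to cite them in the answer, and returning `None` when retrieval finds nothing gives the system an explicit point at which to escalate rather than improvise.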

Data Privacy

Sending customer data to third-party LLM APIs raises GDPR and sector-specific compliance concerns. Organisations in finance, healthcare, and the public sector should look for on-premise or private-cloud deployment options where data never leaves their infrastructure. Learn more about what private AI means and why it matters for regulated organisations.

Brand and Tone Consistency

Without guardrails, a generative AI system may drift from your brand voice. Implement tone guidelines, system prompts, and a human review layer for sensitive interactions.

How to Implement Generative AI for Customer Service

- Audit your most frequent query types — Identify the top 20% of queries that account for 80% of volume.

- Build a grounded knowledge base — Connect your AI to verified, up-to-date internal content: FAQs, product documentation, policies.

- Choose a deployment model — Cloud-based for speed and flexibility; on-premise for maximum data control in regulated environments.

- Define escalation rules — Specify the conditions under which the AI hands off to a human: topic sensitivity, sentiment thresholds, or specific account flags.

- Pilot with a subset of channels — Launch on email or chat before scaling to all touchpoints.

- Measure and iterate — Track deflection rate, CSAT, first-contact resolution, and average handle time. Use conversation logs to continuously refine your knowledge base.
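
Three of the steps above (the query audit, the escalation rules, and the metrics) reduce to small calculations, sketched below in Python. Everything here is an assumption made for illustration: the topic names, account flags, sentiment scale and threshold, the `Conversation` and `Ticket` structures, and the metric definitions (CSAT as the share of ratings of 4 or 5, average handle time over human-handled tickets only). Your own definitions may differ.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional

# Illustrative values only; replace with your own policy.
SENSITIVE_TOPICS = {"legal", "complaint", "account_closure"}
ESCALATION_FLAGS = {"vip", "open_dispute"}
SENTIMENT_FLOOR = -0.5  # sentiment scored from -1.0 (negative) to 1.0 (positive)


def top_query_types(labels: list, coverage: float = 0.8) -> list:
    """Return the most frequent query types that together reach the coverage share."""
    total = len(labels)
    selected, covered = [], 0
    for label, count in Counter(labels).most_common():
        if covered >= coverage * total:
            break
        selected.append(label)
        covered += count
    return selected


@dataclass
class Conversation:
    topic: str
    sentiment: float
    account_flags: set = field(default_factory=set)


def should_escalate(conv: Conversation) -> bool:
    """Hand off to a human when any one escalation condition is met."""
    return (
        conv.topic in SENSITIVE_TOPICS
        or conv.sentiment < SENTIMENT_FLOOR
        or bool(conv.account_flags & ESCALATION_FLAGS)
    )


@dataclass
class Ticket:
    resolved_by_ai: bool
    first_contact_resolved: bool
    handle_seconds: float          # agent time spent on the ticket
    csat: Optional[int] = None     # 1 to 5 rating, None if the customer gave none


def _ratio(part: float, whole: float) -> float:
    """Divide safely, returning 0.0 when there is nothing to measure."""
    return part / whole if whole else 0.0


def support_metrics(tickets: list) -> dict:
    """Compute the four tracking metrics under the definitions assumed above."""
    human = [t for t in tickets if not t.resolved_by_ai]
    rated = [t.csat for t in tickets if t.csat is not None]
    return {
        "deflection_rate": _ratio(sum(t.resolved_by_ai for t in tickets), len(tickets)),
        "first_contact_resolution": _ratio(
            sum(t.first_contact_resolved for t in tickets), len(tickets)
        ),
        "avg_handle_seconds": _ratio(sum(t.handle_seconds for t in human), len(human)),
        "csat": _ratio(sum(score >= 4 for score in rated), len(rated)),
    }
```

Keeping the escalation conditions as named constants rather than burying them in prompt text makes them easy to review and adjust as the pilot produces data.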

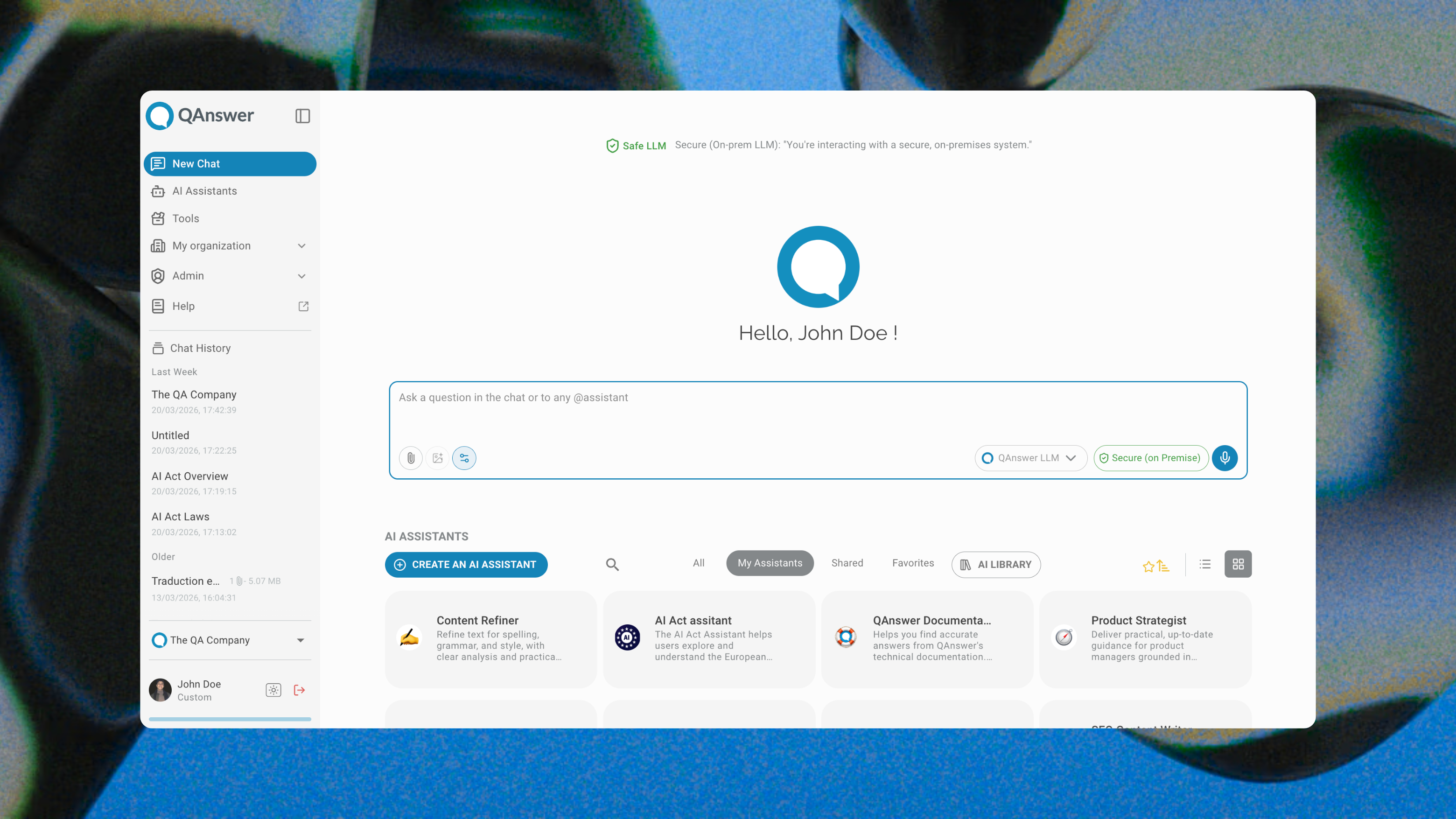

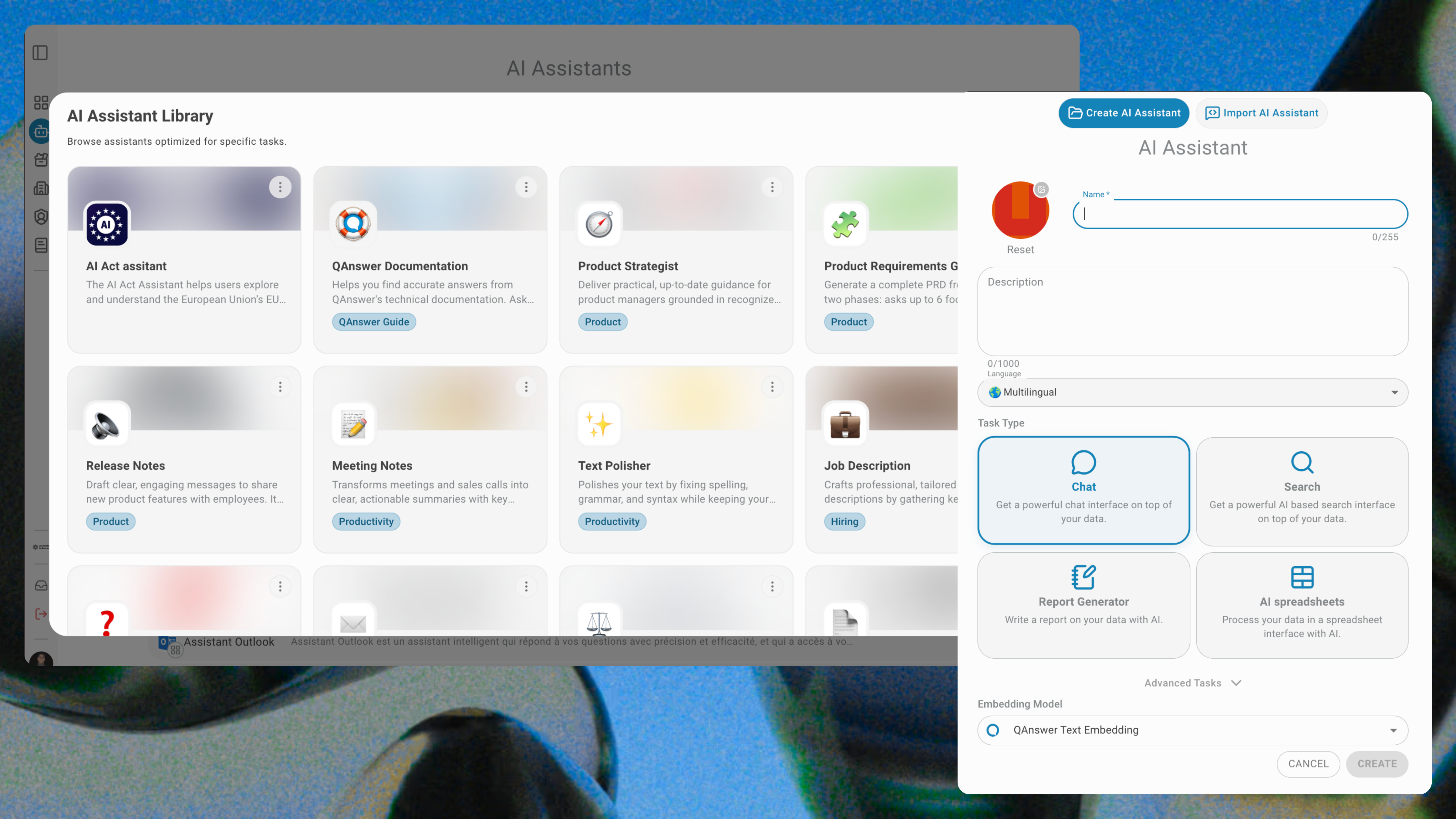

How QAnswer Delivers Accurate Generative AI Customer Service

Most generative AI customer service deployments fail for one reason: the AI is disconnected from the organisation's actual knowledge. QAnswer solves this by combining state-of-the-art LLMs with your proprietary data — building a secure, sovereign AI assistant that answers from verified internal content and cites its sources.

- Retrieval-augmented generation (RAG): Every answer is composed by retrieving the most relevant passages from your knowledge base, then generating a concise response from them. Grounding answers in retrieved content sharply limits hallucination.

- Source citations: Every response includes a link to the source document, so customers and agents can verify the answer in one click.

- Sovereign deployment: QAnswer runs entirely within your infrastructure. Customer data and internal knowledge never reach a third-party LLM provider. ISO 27001 certified.

- 100+ data connectors: Connect SharePoint, Confluence, Zendesk knowledge base, SQL databases, REST APIs, and more. QAnswer stays current as your documentation changes.

- Multi-channel deployment: One knowledge base powers your website AI specialist, Microsoft Teams integration, WhatsApp bot, and API, with no duplicated configuration.

Conclusion

Generative AI in customer service is not a pilot project anymore — it is core infrastructure for organisations that want to remain competitive. The teams winning in 2026 are those that have deployed generative AI grounded in their own knowledge, with clear escalation paths and robust privacy controls.

Ready to build a generative AI customer service operation you can trust? Talk to the QAnswer team and discover how quickly you can deploy a grounded, secure AI assistant for your support function.
